Generation

Key idea: Language models generate text one word at a time by sampling the next word according to learned counts.

Use a pre-trained (hand-built) bigram model to generate new text through weighted random sampling.

- Bigram model: a model that predicts the next word based on one previous word. This is what you build in the fundamental lessons; each row of your grid represents what can follow a single word.

- Weighted random sampling: choosing the next token with probability proportional to its frequency. Your dice rolls implement this; words with higher counts are more likely to be selected.

You will need

- your completed bigram model from Training

- a d10 (or similar) for weighted sampling

- pen and paper for jotting down the generated text

For each pair (or group) of students:

- the trained cutouts spread from Training (already laid out on a table)

- pen and paper for writing down the generated text

The Tools page has ready-to-print token cutouts for several texts if you haven’t done the Training lesson yet. Each PDF starts with a student-facing instructions page that walks groups through the matching game.

Your goal

Generate new text from your bigram language model. Stretch goal: keep going and write a whole story.

Generate new text by walking the spread: pick a starting word, find the cutouts whose previous-word box colour matches, choose one visually, and repeat using the next word you picked as your new starting point. Stretch goal: keep going and write a whole story.

Key idea

A language model proposes several possible next words along with how likely each is. Dice rolls pick among those options, and repeating the process word by word yields fluent text.

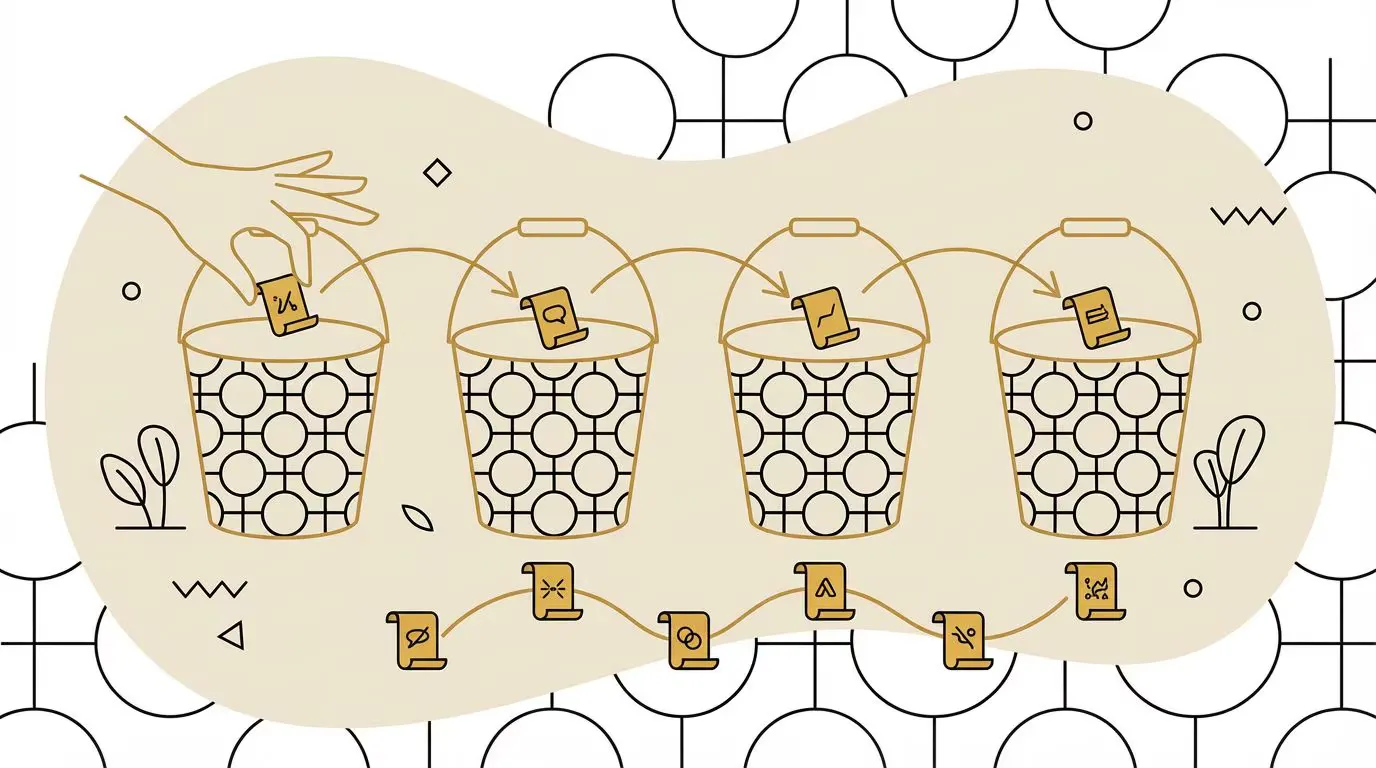

The chain grows like dominoes: write a word, find a cutout whose previous-word box matches it, write that cutout’s next word, then hunt for the next match. The spread already encodes the weights—common previous words appear on more cutouts, and your eye naturally lands on next words that appear more often. So scanning for matching cutouts and picking one visually is weighted random sampling—no dice required.

Algorithm

- Choose a starting word from the first column of your grid.

- Look at that word’s row to find all possible next words and their counts.

- Roll dice weighted by the counts (see Weighted Randomness).

- Write down the chosen word and make it your new starting word.

- Repeat from step 2 until you hit a natural stopping point (e.g., `.`) or your desired length.
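The steps above can be sketched in a few lines of Python. This is only an illustration, not part of the lesson materials: the `counts` grid is made-up toy data, `generate` is a hypothetical helper name, and `random.choices` stands in for the weighted dice roll.

```python
import random

# Bigram grid as a dict of dicts: counts[previous_word][next_word] = tally.
# These toy numbers are invented for illustration only.
counts = {
    "the": {"cat": 3, "dog": 1},
    "cat": {"sat": 2, ".": 1},
    "dog": {"sat": 1},
    "sat": {".": 1},
}

def generate(counts, start, max_words=10, stop=".", rng=random):
    """Walk the grid, sampling each next word in proportion to its count."""
    words = [start]
    current = start
    while len(words) < max_words and current != stop:
        row = counts.get(current)
        if not row:
            # This word has no row, so nothing can follow it.
            break
        options = list(row)
        weights = [row[word] for word in options]
        # The "dice roll": a weighted random pick among the options.
        current = rng.choices(options, weights=weights, k=1)[0]
        words.append(current)
    return words

print(" ".join(generate(counts, "the", rng=random.Random(0))))
```

Passing a seeded `random.Random` makes a run repeatable; leaving `rng` at its default gives different text each time, just as fresh dice rolls would.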

It’s a colour-matching game: the colour of the word you just wrote is the colour of the previous-word box you look for next.

- Pick a starting word—choose any word that appears as a previous word on at least one cutout

- Find candidates—scan the spread for cutouts whose previous-word box matches your current word’s colour, then verify the word itself matches before committing (two unrelated words can occasionally share a colour)

- Pick one cutout—visually pick any matching cutout (your eye will tend to land on cutouts whose next words are common, because there are more of them—that’s weighted sampling for free)

- Write down the next word from the cutout you picked

- Put the cutout back in the spread (this matters: removing it would change the model’s distribution for next time)

- The word you just wrote becomes your new current word—go back to step 2

- Keep going as long as you like—if no cutouts match your current word, just pick a new starting word and carry on. Stop when you’ve generated enough text
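The cutouts version of the walk can be sketched the same way. The snippet below is a rough illustration under assumptions: the `spread` list is invented, `walk_spread` is a hypothetical name, and a uniform `random.choice` among matching cutouts stands in for "picking visually".

```python
import random

# Each cutout is a (previous_word, next_word) pair.
# This small spread is invented for illustration.
spread = [
    ("the", "cat"), ("the", "cat"), ("the", "cat"), ("the", "dog"),
    ("cat", "sat"), ("dog", "sat"), ("sat", "the"),
]

def walk_spread(spread, start, length=8, rng=random):
    """Generate `length` words by repeatedly picking a matching cutout."""
    text = [start]
    current = start
    while len(text) < length:
        # Find candidates: every cutout whose previous word matches.
        matches = [cutout for cutout in spread if cutout[0] == current]
        if not matches:
            # No cutouts match: pick a new starting word and carry on.
            current = rng.choice(spread)[0]
            text.append(current)
            continue
        # Every cutout is equally likely, so a next word that appears
        # on more cutouts is picked more often.
        _, next_word = rng.choice(matches)
        text.append(next_word)
        # The spread list is never modified: the cutout is "put back".
        current = next_word
    return text

print(" ".join(walk_spread(spread, "the", rng=random.Random(0))))
```

Because `spread` is left untouched between picks, every step samples from the same distribution, which is the point of putting the cutout back.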

Example

Before you try generating text yourself, work through this example to see the algorithm in action.

Using the same bigram model from the example in Training:

|      | see | spot | run | . | jump | , |
|---|---|---|---|---|---|---|
| see  |   | 2 |   |   |   |   |
| spot |   |   | 1 |   | 1 | 2 |
| run  |   |   |   | 2 |   | 1 |
| .    | 1 |   | 1 |   | 1 |   |
| jump |   |   |   | 2 |   | 1 |
| ,    |   | 2 | 1 |   | 1 |   |

- choose (for example) `see` as your starting word

- `see` (row) → `spot` (column); it’s the only option, so write down `spot` as next word

- `spot` → `run` (25%), `jump` (25%) or `,` (50%); roll dice to choose

- let’s say dice picks `run`; write it down

- `run` → `.` (67%) or `,` (33%); roll dice to choose

- let’s say dice picks `.`; write it down

- `.` → `see` (33%), `run` (33%) or `jump` (33%); roll dice to choose

- let’s say dice picks `see`; write it down

- `see` → `spot`; it’s the only option, so write down `spot`

- … and so on

After the above steps, the generated text is “see spot run. see spot”
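The percentages in the walkthrough come straight from the counts: each count divided by the total for its row. A minimal sketch of that arithmetic, using the `spot` and `run` rows from the example (the helper name `probabilities` is just for illustration):

```python
from fractions import Fraction

# Rows taken from the worked example: next word -> count.
row_spot = {"run": 1, "jump": 1, ",": 2}
row_run = {".": 2, ",": 1}

def probabilities(row):
    """Turn a row of counts into exact probabilities."""
    total = sum(row.values())
    return {word: Fraction(count, total) for word, count in row.items()}

for name, row in [("spot", row_spot), ("run", row_run)]:
    shares = ", ".join(
        f"{word!r} {float(p):.0%}" for word, p in probabilities(row).items()
    )
    print(f"{name} -> {shares}")
```

For `spot` this gives 1/4, 1/4 and 2/4, which are the 25%, 25% and 50% used above; for `run` it gives 2/3 and 1/3, rounded to 67% and 33%.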

Using the cutouts spread from the example in Training:

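| Previous word | Next words |
|---|---|
| `see` | `spot`, `spot` |
| `spot` | `run`, `jump`, `,`, `,` |
| `run` | `.`, `.`, `,` |
| `.` | `see`, `run`, `jump` |
| `jump` | `.`, `.`, `,` |
| `,` | `spot`, `spot`, `run`, `jump` |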

- start with `see`: write it down

- scan for cutouts with `see` as the previous word (look for boxes in `see`’s colour); both have `spot` as the next word, so pick one and write down `spot`

- scan for `spot` cutouts: 4 matches (1 `run`, 1 `jump`, 2 `,`). Pick visually. Your eye is more likely to land on a comma because there are two of them, but for variety let’s say `run`; write it down

- scan for `run` cutouts: 3 matches (2 `.`, 1 `,`). Two-thirds chance of `.`, so let’s say `.`; write it down

- scan for `.` cutouts: 3 matches (one each of `see`, `run`, `jump`). Let’s say `see`; write it down

- back to scanning for `see` cutouts: both are `spot`, so write down `spot`

- … and so on

After the above steps, the generated text is “see spot run. see spot”

Notice how the randomness comes from your eye landing somewhere on the spread; you don’t need dice. Previous words with more cutouts of the same next word are more likely to produce that word: both `see` cutouts have `spot` as the next word, so generation from `see` always produces `spot`.

Optional extension: pre-grouped piles

If you grouped the cutouts into piles during training (see the Training lesson’s optional extension), generation becomes faster: step 2 is now “find the pile labelled with my current word” instead of scanning the whole table. The behaviour and the resulting output distribution are unchanged.
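In code terms, pre-grouping is like building a lookup table once so you don't have to rescan the whole list every time. A small sketch, assuming the cutouts are stored as (previous word, next word) pairs; it uses part of the example spread, and the function names are hypothetical:

```python
from collections import defaultdict

# Part of the example spread as (previous word, next word) cutouts.
spread = [
    ("see", "spot"), ("see", "spot"),
    ("spot", "run"), ("spot", "jump"), ("spot", ","), ("spot", ","),
    ("run", "."), ("run", "."), ("run", ","),
]

# Grouping step: one pile per previous word.
piles = defaultdict(list)
for previous, next_word in spread:
    piles[previous].append(next_word)

def candidates_by_scanning(spread, current):
    """Scan the whole table for cutouts matching the current word."""
    return [next_word for previous, next_word in spread if previous == current]

def candidates_from_pile(piles, current):
    """Go straight to the pile labelled with the current word."""
    return piles.get(current, [])

# Both approaches find the same candidates, so the distribution is unchanged.
for word in ("see", "spot", "run"):
    assert candidates_by_scanning(spread, word) == candidates_from_pile(piles, word)
```

The piles only change how quickly you find the candidates; the pick among them is still a uniform choice over the same cutouts.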

Instructor notes

Icebreaker question

Before walking students through the algorithm, ask:

- in as much detail as you can, explain what happens after typing something into the ChatGPT prompt box to produce the answer you get back

This surfaces students’ existing mental model of generation before they perform it by hand.

ChatGPT is OpenAI's chatbot, and probably the most well-known LLM product. On this site we often use "ChatGPT" as shorthand for any modern LLM chatbot; the concepts apply equally to Claude, Gemini, DeepSeek and others, and the underlying principles are the same regardless of which product you use.

Discussion questions

- how does the starting word affect your generated text?

- why does the text sometimes get stuck in loops?

- if this is a bigram (i.e. 2-gram) model, how would a unigram (1-gram) model work?

- how could you make generation less repetitive?

- does the generated text capture the style of your training text?

- why might the text sometimes get stuck repeating the same pattern?

- what happens when only one cutout matches your current word?

- why do we leave the cutouts on the table after picking one?

- does the generated text sound like the original training text?

Troubleshooting

- “Every row only has one tally mark—there’s nothing to roll for.” If the group didn’t get very far in Training and no row has more than one tally mark, the generation algorithm won’t be very interesting—there will only ever be one option for the next word and they’ll be stuck on rails. In this case, either encourage them to go back and do a bit more training, or just have them add some extra tally marks to the grid wherever they like. This isn’t as much like cheating as it might seem—it’s really just an example of using Synthetic Data.

- “I landed on a word that doesn’t have its own row.” This can happen if a word only ever appeared as the last word in the training text—it has a column (other words lead to it) but no row (it never leads to anything). Just pick any other word that does have a row and continue from there.

- “We’re stuck in a loop.” With small models it’s common to bounce between two words that only point at each other (e.g. `,` → `spot` → `,` → `spot` → …). Try picking a different starting word, or just choose any other valid next word to break out of the cycle.

- “Every previous word only has one matching cutout—there’s nothing random about this.” If the spread is small and each previous word only matches a single cutout, generation will feel deterministic. Either go back and train on more text, or add a few extra cutouts (writing them out by hand is fine). This isn’t really cheating—it’s an example of Synthetic Data.

- “I picked a next word that doesn’t appear as a previous word anywhere on the table.” This can happen if a word only ever appeared as the last word in the training text—it shows up as a next word but never as a previous one. Just pick a different starting word that does appear on the spread and continue from there.

- “We keep going back and forth between the same two words.” With small spreads it’s common to get stuck in a loop where two words keep pointing to each other. Try starting from a different word, or just pick any other matching cutout to break the cycle.
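If a group's model has been typed up, two of these problems can be spotted ahead of time. The sketch below is only an illustration: the training text is made up, and `dead_ends` and `single_option_words` are hypothetical helper names.

```python
from collections import Counter

tokens = "the cat sat on the mat".split()  # made-up training text
pairs = list(zip(tokens, tokens[1:]))       # (previous word, next word)

def dead_ends(pairs):
    """Words that appear as a next word but never as a previous word."""
    previous_words = {previous for previous, _ in pairs}
    next_words = {next_word for _, next_word in pairs}
    return sorted(next_words - previous_words)

def single_option_words(pairs):
    """Previous words with exactly one tally or cutout, so nothing to choose."""
    tallies = Counter(previous for previous, _ in pairs)
    return sorted(word for word, n in tallies.items() if n == 1)

print(dead_ends(pairs))
print(single_option_words(pairs))
```

Here `mat` is a dead end because it only appears as the last word, and `cat`, `on` and `sat` each have a single option; only `the` offers a real choice.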

Connection to current LLMs

This generation process is identical to how current LLMs produce text:

- sequential generation: both generate one word at a time

- probabilistic sampling: both use weighted random selection (exactly like your dice or cutouts spread)

- probability distribution: a neural network outputs probabilities for all 50,000+ possible next tokens. A probability distribution is a set of options with associated likelihoods; in your model, the counts in a row (or matching cutouts in the spread) form a probability distribution over possible next words

- no planning: neither looks ahead—just picks the next word

- variability: the same prompt can produce different outputs due to randomness. A prompt is the input text you give to a language model; in a bigram model, the "prompt" is just the single current word used to predict what comes next, while in modern LLMs a prompt can be hundreds of thousands or even millions of tokens long, giving the model much more context to work with

The key point: sophisticated AI responses emerge from this simple process repeated thousands of times. Your paper model demonstrates that language generation is fundamentally about sampling from learned probability distributions. The randomness is why LLMs give different responses to the same prompt and why language models can be creative rather than repetitive. Your dice rolls and cutout picks perform the same kind of weighted sampling that a modern language model carries out for every token it generates.

Note: in AI/ML more broadly, this process of using a trained model to produce outputs is commonly called “inference”, and that is the term you'll find in AI/ML literature and tooling. In these teaching resources we use “generation” specifically because it more clearly describes what language models do: they generate text.

Comparison to dice method

The cutouts spread and the dice method produce equivalent results:

- dice rolls with weighted probabilities select from options based on counts

- visual selection from the spread selects from options where counts are represented by multiple physical cutouts

- the spread makes the probability tangible: if three of the cutouts with `the` as the previous word have `cat` as their next word and one has `dog`, you’ll land on `cat` about 75% of the time, just like weighted dice would

The cutouts method avoids the need to calculate percentages or understand dice mechanics, making it more accessible for younger learners.
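The equivalence can be checked with a quick simulation. This is a rough sketch under one assumption: picking a cutout "by eye" is modelled as a uniform random choice among the matching cutouts. The `the`/`cat`/`dog` cutouts are the ones from the bullet above.

```python
import random
from collections import Counter

# Four cutouts with "the" as the previous word: three "cat", one "dog".
cutouts = [("the", "cat")] * 3 + [("the", "dog")]

# The same information as a row of counts for the dice method.
counts = Counter(next_word for previous, next_word in cutouts if previous == "the")

rng = random.Random(42)
trials = 10_000

# Cutouts method: every cutout is equally likely to be picked.
by_eye = Counter(rng.choice(cutouts)[1] for _ in range(trials))

# Dice method: pick among the distinct words, weighted by their counts.
by_dice = Counter(
    rng.choices(list(counts), weights=list(counts.values()), k=trials)
)

share_eye = by_eye["cat"] / trials
share_dice = by_dice["cat"] / trials
print(f"cutouts: {share_eye:.2f}, dice: {share_dice:.2f}")
```

Both shares should come out close to 0.75, with small differences between runs that shrink as `trials` grows.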

Interactive widget

Step through the generation process at your own pace. Click on a row to select a starting word, then press Play or Step to watch the dice roll and text being generated. You can also edit the training text to create your own model.

Step through the generation process at your own pace. Click on a cutout to select a starting word, then press Play or Step to watch matching cutouts being picked and text being generated. You can also edit the training text to create your own model.